It’s Not a Marketing Problem

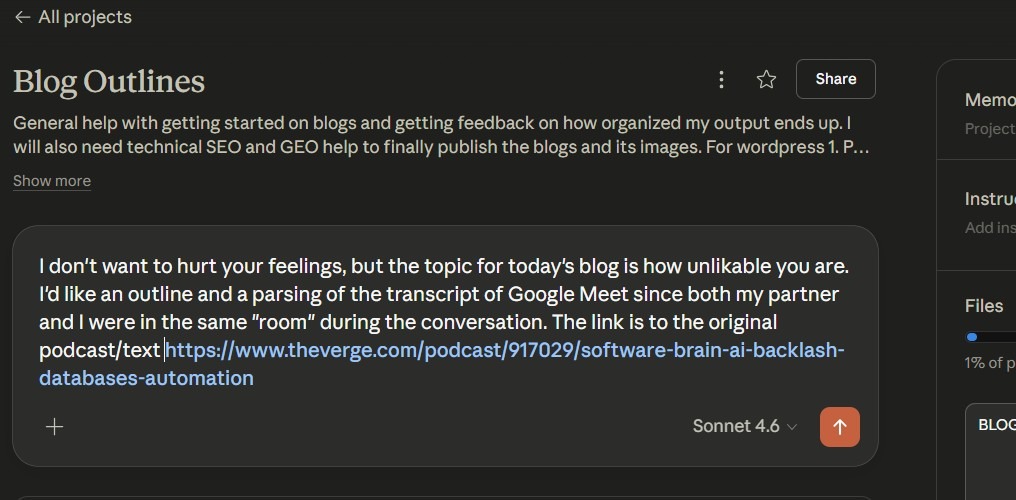

When Dan and I sat down (or in my case, groaned loudly with a shoulder injury) to listen to a recent Verge podcast (Decoder by Nilay Patel), I was thinking mostly about how I actually needed to take time away from “work”–a very slippery term when you work from home–to clean the house in preparation for my mom to arrive and spend the week here. But the boss insisted the pod was short, and now, here I am, writing a blog post with an icepack on my shoulder and tear-crust in my ducts, resigned to the fact that my house will not be in properly decluttered condition for an honored houseguest–my mother.

I guess I’m lucky I don’t have the most judgy of tiger moms.

What followed was a really interesting bite-sized taste of something I feel in my bones but repress daily for the sake of my work. And that is that most people in America HATE AI.

(This isn’t a new topic for us. It’s not even the first time Dan and I have riffed on it from a podcast.)

Dan and I started this business with the thought that only humans can make the world better, and that we can use technology to help humans whose life calling is world-betterment. We don’t try to sell some folks’ vision of an AI-first company. Our work focuses heavily on the various risks of using AI (and there are plenty!). My fellow career teachers are generally skeptical of computational technologies; some are still doing everything they can to preserve such cognitive treasures as “not using Wikipedia” and “handwriting.”

Dan’s background is in statistics and machine learning, so of course he has the clearest vision of how AI works, can work, and ought to work. But even he has many reservations.

We do think AI can help social impact organizations survive and help social impact itself be more, well, impactful. We’ve seen it happen. Our business mission isn’t to ride on hype’s coattails, but to do what all businesses ought to do…be helpful. And the very basis of our marketing strategy is fairly prototypical: we want people to know, like, and trust us.

And what do our potential clientele do? Use LLMs ALL THE TIME. They actively use them, or at least can’t help using them, and can’t help taking advantage of what they can do. 900 million people a week use ChatGPT.

So people know, they like. But they very forcefully don’t trust. AI’s basically the Flaming Hot Cheetos of productivity. Its biggest fans know exactly where to go in the convenience store when they crave it, and they’re willing to pay the price, but their digestive tracts say something else.

We learned from this podcast that, while slightly more favorable than the Iran War 😬, AI is less liked in American society than ICE (to be fair, there’d be no ICE without AI)–only 26% of people favor AI. Sam Altman, whose own approval rating is 21%, admits readily that if AI were a politician (26%–so rare to have an institution score higher than a human), it would have the worst approval ratings in history. Even with all of their capacity for empathy in question, just about all tech billionaires confess to seeing AI’s harm to society to some degree.

There’s plenty there not to like: alienation from the human affectations that make us feel human, hallucinations, environmental harms, the fact that it’s basically Skynet dressed up like Inspector Gadget.

So what were the most useful takeaways from this podcast?

- Software brain: This is basically a lens for seeing the world that’s common among tech workers. All it sees? Data. Databases. Loops. Now with AI, people conform to software, and all signs fearfully point to technocracy.

- Tech workers are the new lawyers. They play societal chess with specific data and precedent. Both law and the functionality of code are hegemonic and firm, but not completely static, and this potentiality is where the profit lies, and where the rest of society is likely to be cut out.

- The tools, and maybe even many of the software brains themselves, are clearly not what’s unpopular, so much as the profiteers deploying the worldview. Board members wanting headcount cuts but calling it “augmentation.” Workers asked to upskill without getting any reward: financial, time back, or otherwise. We relate, working for a company that teaches AI, but we don’t trust the people who make AI possible. I like to think I’ve been rewarded with time and a wage increase, but how long-term will this reward last?

- AI adoption will fail when leaders won’t name what’s scary. Workers know the company wants cuts, but leaders are asking for unearned trust in changes whose outcomes they can’t predict. AI skills are ultimately needed less than leadership skills in an AI age, something that’s probably not being pressed in MBA programs.

- The social impact organizations we see can’t get away with lying to the staff like companies that are shareholder-first. We are absolutely convinced that aligned AI in one of these organizations can unearth so much promise for society’s betterment. But it’s not because AI is magic, or seemingly so sure of itself. Humans are still the ones that make the world better! But those who serve their needs and the needs themselves? More fragile than ever.

Now we get to the space where my Claude-project-generated outline, one I mostly ignored, encourages me to close with a call to action. Unfortunately, we don’t have a workshop that solves the existential problems and threats of AI, but we are working on a ton of products to help folks figure out where they are.

We still have a lot more work to do, of course. In principle, we hope to bring more humanity to organizations that undulate in the push and pull of shadow AI users and a priori abstainers. We need more discussion, less instruction. More leaning into uncomfortable uncertainty.

[In this post, we used AI for polish, not purpose]
